Connected and automated vehicles (CAVs) signal the start of a transformative era in the automotive industry. These technologies are anticipated to support safer, more accessible, cost-effective, and immersive mobility services while disrupting traditional business models. At a macro level, the industry is working to address legal and legislative challenges and encourage social acceptance. Simultaneously, it is attempting to resolve core technological issues, both on board and off board the AV. Within AVs, today’s focus is on improving the software stack’s detection, classification, path planning, and motion control modules while also securing against the cyber vulnerabilities that may arise from the increased electronic content. The off-board and on-board computing aspects of autonomous development present new challenges for OEMs and suppliers. Autonomous developers need the large storage, high-performance computing, and deep learning capacity that the cloud provides. Integrated with on-board edge computing, the cloud can provide real-time compute and machine learning (ML) inference as well as data reduction to decrease bandwidth loads.

Even as the industry adjusts to the changes caused by COVID-19, it needs to address the following multidimensional challenges related to autonomous development:

- Hyperscaling: Compute and data management issues with respect to the significant amounts of data ingestion, storage, and processing required

- Agility and Speed: Ability to reduce software development and validation costs to enable faster time to market

- Cost and Safety: Lack of AV expertise, infrastructure (on-premise compute, large scale computing, data storage, etc.) costs, and human capital investments. Functional safety and fail-safe methodologies as decision-making is transferred from the driver to the vehicle.

- Ecosystem and Software-defined: Addition of new software from various third-party vendors and partners, leading to workload interoperability issues that, in turn, cause integration and testing concerns

- Compliance: The need for global compliance in security and data privacy.

Over the last five years, OEMs and investment firms have spent several millions of dollars developing the building blocks of autonomous driving. However, an important element in establishing these services is the integration of cloud infrastructure and software deployment. Trials of specific use cases and pilots notwithstanding, progress towards building systems with the vision of scaling them towards full-fledged service solutions remains limited.

AWS’s Two-pronged Approach towards AV Deployment Strategies

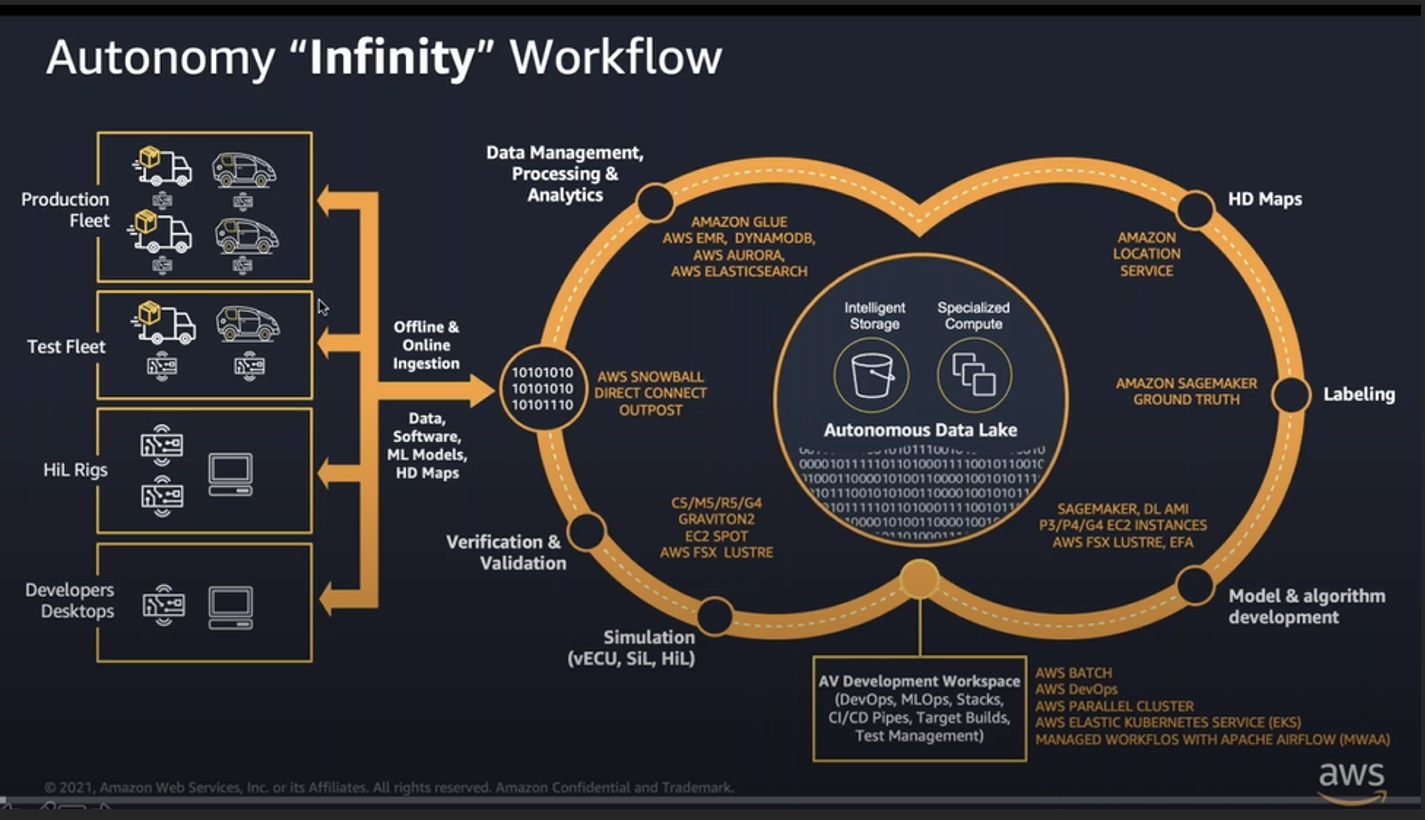

The ability to continually build, train, simulate, and test is essential to improving the accuracy of perception and path planning models. AWS addresses these challenges with a suite of solutions and tools, classified as “Infinite Loop” and “Big Loop,” across the entire AV development cycle.

The “Infinite Loop” Approach: This integrated, holistic approach centers on the infinity workflow, i.e., a process that never ends but continues to evolve and improve. This loop consists of five significant steps where AWS and its partner network provide crucial services that become the essential building blocks in customers’ successful AV deployment journeys.

Exhibit 1: The Five Steps of the AWS “Infinity” Workflow

- Data Management, Processing, and Analysis

A typical test vehicle generates 10-120 TB of data during a 6-8 hour drive. These massive data sets are transferred online to AWS data centers using AWS Direct Connect, S3 Transfer Acceleration, Kinesis Data Firehose, or AWS DataSync. When data volumes are too large to move over the network in a practical time, they can be transferred physically using AWS’s Snowball family of devices, which offer physical storage with computing capabilities. However, storing data is only part of the problem; there is also a cost component based on how frequently the data needs to be accessed. Tiered services, such as S3 Glacier Deep Archive for infrequently accessed data, support customers with cost optimization. In addition, the recently created AV data lake v2 has deep constructs that target specific customer needs, becoming the central part of any organization’s data strategy. V2 is an MDF4/Rosbag-based data ingestion and processing pipeline that allows data scientists and developers to access data with their choice of analytic tools and frameworks and draw insights from ML-based models.
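
The choice between online and physical transfer comes down to simple arithmetic on data volume and link speed. The sketch below is illustrative only: the 10 Gbps link, the 80% utilization factor, and the 24-hour cutoff are assumptions made for the example, not AWS guidance, and the function names are hypothetical.

```python
def transfer_hours(data_tb: float, link_gbps: float, utilization: float = 0.8) -> float:
    """Hours needed to move data_tb (decimal terabytes) over a link_gbps link.

    utilization is an assumed fraction of the nominal bandwidth actually achieved.
    """
    bits = data_tb * 1e12 * 8                      # terabytes -> bits
    effective_bps = link_gbps * 1e9 * utilization  # usable bits per second
    return bits / effective_bps / 3600


def choose_transfer_path(data_tb: float, link_gbps: float, max_hours: float = 24.0) -> str:
    """Return "online" if the upload fits in max_hours, otherwise "physical"."""
    return "online" if transfer_hours(data_tb, link_gbps) <= max_hours else "physical"


if __name__ == "__main__":
    # The two ends of the 10-120 TB per-drive range quoted in the text.
    for volume_tb in (10, 120):
        hours = transfer_hours(volume_tb, link_gbps=10)
        path = choose_transfer_path(volume_tb, link_gbps=10)
        print(f"{volume_tb} TB: {hours:.1f} h -> {path}")
```

Under these assumptions, a single vehicle at the low end of the range uploads in a few hours, while the high end takes longer than a day, which is where a physical device starts to look attractive for a fleet.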

- Labeling

Labeling is a tedious, costly process that is massive in scale and requires very high accuracy. Unfortunately, not all labeling can happen automatically, necessitating a human labeler. SageMaker Ground Truth provides tools to support human labelers across 3D point cloud, video, and image data sets received from customers. A typical customer can choose its own workforce to label or leverage AWS’s partner network to support this process with a “pay-as-you-go” option. For 2D models, the company also offers auto-labeling services where an active learning model is trained using human-labeled data sets.
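
One common way to combine a trained model with human labelers is to accept the model's label only when it is confident and send everything else to people. The sketch below shows that routing step in isolation. It is a generic illustration, not the SageMaker Ground Truth API; the `Prediction` type, the `route_predictions` function, and the 0.9 threshold are all hypothetical.

```python
from typing import List, NamedTuple, Tuple


class Prediction(NamedTuple):
    item_id: str
    label: str
    confidence: float  # model's confidence in its own label, 0.0 to 1.0


def route_predictions(
    predictions: List[Prediction], threshold: float = 0.9
) -> Tuple[List[Prediction], List[Prediction]]:
    """Split predictions into (auto_labeled, needs_human) by confidence."""
    auto_labeled, needs_human = [], []
    for prediction in predictions:
        if prediction.confidence >= threshold:
            auto_labeled.append(prediction)
        else:
            needs_human.append(prediction)
    return auto_labeled, needs_human


if __name__ == "__main__":
    batch = [
        Prediction("img-001", "pedestrian", 0.97),
        Prediction("img-002", "cyclist", 0.62),
        Prediction("img-003", "car", 0.88),
    ]
    auto, human = route_predictions(batch)
    print("auto-labeled:", [p.item_id for p in auto])
    print("sent to human labelers:", [p.item_id for p in human])
```

In an active learning setup, the human-corrected items would then be added to the training set so that the model needs less help on the next batch.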

- Model and Algorithm Development

Complex simultaneous simulations require large compute (CPU and GPU) capacity, which is expensive to build and lacks the flexibility to meet development timeline pressures. It also requires internal expertise, which is lacking across several automakers and suppliers. AWS provides this service with the option to create an AV ML stack through SageMaker. In addition, AWS provides various types of EC2 instances with different configurations of CPU, memory, storage, and networking resources to suit user needs. An EC2 instance is a virtual server, and customers can launch a practically unlimited number of them. Each instance type is available in different sizes to address specific workload requirements. For example, P3 and P4 instances use the latest NVIDIA GPUs and are designed for HPC and large distributed ML training jobs. Graviton2 is AWS’s Arm-based CPU, which powers its own family of instances. It offers a 30% performance improvement as well as significant cost optimization, and its Arm architecture is the foundation for environmental parity.

Toyota Research Institute (TRI-AD) is one of many examples where Amazon’s EC2 P3 instances were used to reduce the time taken to train ML models by 75%. This has allowed TRI-AD to incorporate new data sets to retrain models and introduce new features.

- Simulation and Verification & Validation (V&V)

As the auto industry is highly regulated, the ISO 26262 V-Model prescribes methodologies to mitigate safety risks across automotive applications. This pattern is reflected in most AV development projects, where hardware-in-the-loop (HiL) simulation is required for much of the system-level testing and validation on the right side of the V-Model. AWS provides several services to support this process via open- and closed-loop workflows. A typical perception module uses an open-loop workflow, whereas the planning/control module uses a closed-loop workflow.
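
The difference between the two workflows is whether the module's output feeds back into its next input. The toy sketch below illustrates this under heavy simplification: the threshold "detector", the proportional controller, and the one-dimensional vehicle model are all hypothetical stand-ins, not part of any AWS service.

```python
def open_loop_eval(recorded_frames, detector):
    """Replay recorded (frame, ground_truth) pairs through a detector.

    The detector's output never changes what it sees next, so the same
    recording can be replayed against any number of model versions.
    """
    hits = sum(1 for frame, truth in recorded_frames if detector(frame) == truth)
    return hits / len(recorded_frames)


def closed_loop_run(controller, position, target, steps, dt=0.1):
    """Simulate a controller whose commands change the state it observes next."""
    trace = [position]
    for _ in range(steps):
        velocity = controller(target - position)  # command from current error
        position += velocity * dt                 # simulated vehicle responds
        trace.append(position)
    return trace


if __name__ == "__main__":
    # Open loop: a toy perception model scored against recorded labels.
    frames = [(0.9, "car"), (0.2, "none"), (0.7, "none"), (0.1, "none")]
    detector = lambda score: "car" if score > 0.5 else "none"
    print("perception accuracy:", open_loop_eval(frames, detector))

    # Closed loop: a proportional controller driving toward a target position.
    trace = closed_loop_run(lambda error: 2.0 * error, position=0.0, target=10.0, steps=50)
    print("final position:", round(trace[-1], 3))
```

Because a closed-loop run depends on its own history, it cannot be evaluated from a fixed recording alone, which is why planning and control testing needs a simulator in the loop.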

Mobileye is one of several companies that leverage EC2 Spot Instances and AWS Batch to run large-scale simulations, allowing it to scale AV workloads up and down flexibly.

- AV Development Workspace

Last but not least, architects developing workflows need a holistic view of all running pipelines and jobs, while overcoming challenges such as disparate data sources based on specific use cases, data cleansing, and preparation for downstream consumption. An Amazon Managed Workflows for Apache Airflow (MWAA) environment enables end-to-end data pipelines to be set up and operated in the cloud at scale. In Continental Automotive Edge (CAEdge), AWS provides an example of a virtual workbench that offers toolchains to develop, supply, and maintain software-intensive system functions. These toolchains also provide access to AWS Partner Network (APN) tools, which are laid out in the workspace to help data scientists and developers do their jobs efficiently.
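
Airflow models a pipeline as a directed acyclic graph of tasks, each of which runs only after the tasks it depends on. The sketch below reproduces that idea with Python's standard-library `graphlib` rather than Airflow itself, so it runs anywhere. The task names and the `PIPELINE` layout are assumptions chosen to mirror the workflow steps described in this article, not an actual MWAA configuration.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it starts.
PIPELINE = {
    "ingest": set(),
    "extract": {"ingest"},
    "label": {"extract"},
    "train": {"label"},
    "simulate": {"train"},
    "report": {"train", "simulate"},
}


def run_pipeline(graph, tasks):
    """Run every task in dependency order and return (order, results)."""
    order = list(TopologicalSorter(graph).static_order())
    results = {name: tasks[name]() for name in order}
    return order, results


if __name__ == "__main__":
    # Placeholder task bodies; real tasks would launch cloud jobs.
    tasks = {name: (lambda n=name: f"{n} done") for name in PIPELINE}
    order, results = run_pipeline(PIPELINE, tasks)
    print(" -> ".join(order))
```

A managed scheduler adds what this sketch leaves out: retries, scheduling, parallel execution of independent tasks, and the monitoring view that gives architects visibility into every running job.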

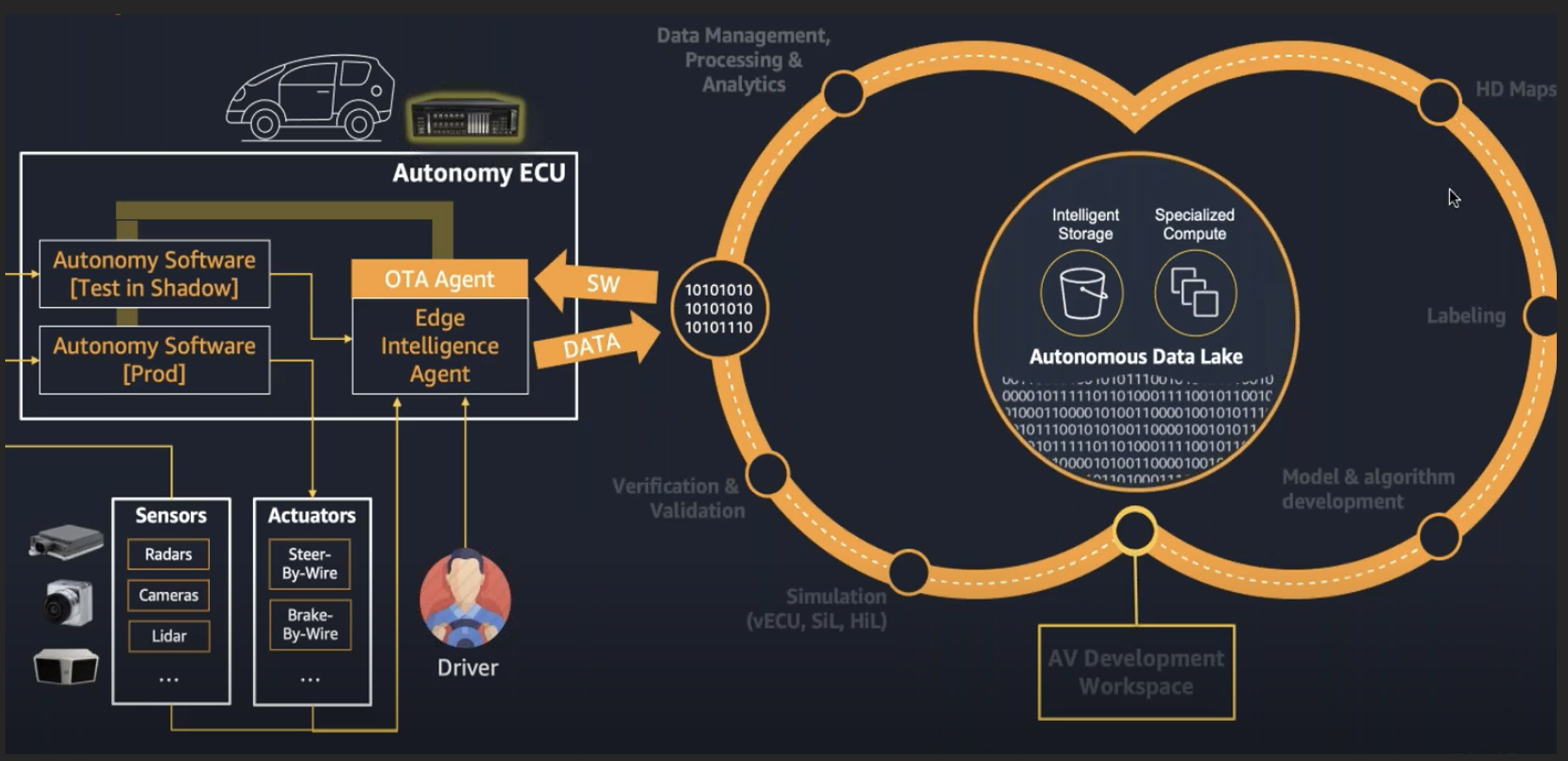

The “Big Loop” Approach

The “Big Loop” approach showcases how software assets prepared through the “Infinite Loop” approach can be deployed in shadow mode or through an edge intelligent agent.

Exhibit 2: AWS “Big Loop” Workflow

In strategic partnership with AWS, BlackBerry has co-developed the BlackBerry IVY platform to offer wide-ranging expertise in the automotive cloud. AWS has successfully taken its massive portfolio down to the edge and expanded its portfolio of cloud features. Software is deployed to the autonomous ECU through over-the-air (OTA) agents. The ECU runs not only the production software but also a test version in parallel, which is called shadow mode. The edge intelligent agent compares the performance of the production software with that of the software under test, highlights any agreement or disagreement between the two versions, and sends the results back online via the infinite loop. The increase in vehicle edge intelligence will increase the ability to capture more pertinent information, which, in turn, will improve the overall competency of the system.
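
The comparison step in shadow mode can be pictured as running both software versions on the same input and recording only the cases where they differ. The sketch below is a minimal illustration under assumed details: `shadow_compare`, the scalar outputs, and the 0.05 tolerance are hypothetical, and a real agent would compare far richer outputs such as object lists or planned trajectories.

```python
def shadow_compare(frames, production, candidate, tolerance=0.05):
    """Run both versions on each frame and collect the disagreements.

    Only the production output would drive the vehicle; the candidate
    output is computed purely for comparison.
    """
    disagreements = []
    for frame_id, sensor_input in frames:
        production_output = production(sensor_input)
        candidate_output = candidate(sensor_input)
        if abs(production_output - candidate_output) > tolerance:
            disagreements.append(
                {
                    "frame": frame_id,
                    "production": production_output,
                    "candidate": candidate_output,
                }
            )
    return disagreements


if __name__ == "__main__":
    # Toy models: the candidate behaves differently only for large inputs.
    production = lambda x: 0.5 * x
    candidate = lambda x: 0.5 * x if x < 1.0 else 0.6 * x
    frames = [(0, 0.2), (1, 0.8), (2, 2.0)]
    for event in shadow_compare(frames, production, candidate):
        print("disagreement on frame", event["frame"])
```

Uploading only the disagreement records, rather than every frame, is what keeps the data sent back to the cloud both small and relevant for retraining.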

Through this process, AWS brings insights closer to the edge, enabling seamless deployments through OTA updates, thereby resulting in a continuous reinvention of the customer experience. Instead of streaming all data to the cloud, the data required can be applied close to the edge in an organized way, saving expensive data transmission costs, reducing incompatible niche point solutions, and supporting better scaling services. AWS brings all this together through its IoT Greengrass service, which extends cloud functionality (i.e., cloud management, analytics, and storage) to the edge. Recently, at AWS re:Invent 2021, the company announced IoT FleetWise, with which automakers can easily collect, organize, and standardize data in any format present in their vehicles for easy data analysis in the cloud. This service supports automakers with intelligent filtering capabilities that allow developers to reduce network traffic.
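
Filtering at the edge generally means two things: uploading only the signals of interest, and only when a triggering condition holds. The sketch below illustrates that idea in plain Python. It does not use or reproduce the IoT FleetWise API; the signal names, the `hard_braking` condition, and its 50 bar threshold are invented for the example.

```python
def filter_samples(samples, wanted_signals, condition):
    """Keep only wanted_signals from the samples for which condition is true."""
    kept = []
    for sample in samples:
        if condition(sample):
            kept.append({name: sample[name] for name in wanted_signals if name in sample})
    return kept


def hard_braking(sample):
    """Hypothetical trigger: brake pressure above an assumed 50 bar threshold."""
    return sample.get("brake_pressure_bar", 0.0) > 50.0


if __name__ == "__main__":
    samples = [
        {"t": 0, "speed_kph": 62, "brake_pressure_bar": 3, "cabin_temp_c": 21},
        {"t": 1, "speed_kph": 60, "brake_pressure_bar": 85, "cabin_temp_c": 21},
        {"t": 2, "speed_kph": 41, "brake_pressure_bar": 90, "cabin_temp_c": 21},
        {"t": 3, "speed_kph": 38, "brake_pressure_bar": 5, "cabin_temp_c": 21},
    ]
    uploads = filter_samples(samples, ["t", "speed_kph", "brake_pressure_bar"], hard_braking)
    print(f"uploading {len(uploads)} of {len(samples)} samples")
```

Both the row filter (the condition) and the column filter (the wanted signals) reduce what crosses the cellular link, which is the source of the network traffic savings described above.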

In addition, this “Big Loop” moves software rather than data, and that software requires parity from a software-defined vehicle (SDV) perspective. While several automotive ecosystem participants tend to develop software services in the cloud and then deploy them on the edge, the processor architecture often differs between the two, for example, an Intel processor in the cloud and an Arm processor in the vehicle, or vice versa. This difference is expected to create additional steps such as cross-compilation, which are highly error-prone. In partnership with Arm, AWS will now be able to achieve environmental parity between software executions in the cloud and on the embedded edge, which is one of its important strategies for AV deployment. This environmental parity will help achieve two vital objectives:

- The ability to deploy bit-identical binaries to the cloud and the vehicle edge, leveraging instruction set parity because both environments use a 64-bit Arm architecture

- Conversely, AWS can use the cloud to host most real automotive applications. For example, running auto-grade software natively in the cloud means V&V activities can be performed at scale with native properties, a development that has profound implications.
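
One simple way to check the first objective is to confirm that both environments report the same instruction set and that the deployed artifact has the same cryptographic digest in each. The sketch below is an assumption-laden illustration, not an AWS or SOAFEE tool: the `describe` and `has_parity` helpers are hypothetical, and the architecture strings are passed in by hand, whereas on a real host they could come from `platform.machine()`.

```python
import hashlib

# "aarch64" and "arm64" are two common names for the 64-bit Arm architecture.
ARCH_ALIASES = {"arm64": "aarch64"}


def describe(binary: bytes, arch: str) -> dict:
    """Summarize a deployment as its normalized architecture and SHA-256 digest."""
    normalized = ARCH_ALIASES.get(arch.lower(), arch.lower())
    return {"arch": normalized, "digest": hashlib.sha256(binary).hexdigest()}


def has_parity(cloud: dict, edge: dict) -> bool:
    """True when both sides run the same architecture and identical bytes."""
    return cloud["arch"] == edge["arch"] and cloud["digest"] == edge["digest"]


if __name__ == "__main__":
    artifact = b"\x7fELF placeholder bytes standing in for a real binary"
    cloud = describe(artifact, "aarch64")
    edge = describe(artifact, "arm64")
    print("parity:", has_parity(cloud, edge))

    # A cross-compiled build differs in both architecture and bytes.
    x86_build = describe(artifact + b" rebuilt for x86", "x86_64")
    print("parity with x86 build:", has_parity(x86_build, edge))
```

When the digests match, whatever was validated in the cloud is exactly what runs in the vehicle, which is what removes cross-compilation as a source of errors.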

AWS is one of the co-founding members of SOAFEE, an industry initiative to extend cloud-native software experiences to automotive workloads. It includes an open-source reference implementation to enable commercial and non-commercial offerings. AWS presented this idea at the Arm DevSummit workshop, showcasing real-world examples. This has led to the company creating a parity-enabled SDV ecosystem.

Conclusion: What This Means for the Auto Industry

While today the technology maturity curve for autonomous driving is still nascent, key challenges centered on complex driving scenarios are being addressed by improving the fidelity of sensors, enhancing perception through ML, and developing overall infrastructure. Frost & Sullivan believes that such accelerated improvements in capabilities have resulted in the need for new technology support systems that draw heavily on cloud and embedded edge-based solutions.

As AV development progresses from the pilot phase to commercial deployment, developers will need to look for cost-effective yet rapidly scalable solutions that meet data ingestion and compute demands. Along the software development life cycle of AV applications, cloud and infrastructure support partners like AWS and the AWS Partner Network (APN) play a key role in addressing various challenges related to data handling. Over the next decade, AV developers will face significant pressure to reduce costs and achieve profitability. The ability to scale efficiently and the need to invest financial resources wisely will be vital to sustaining autonomous business models. From S3 and EC2 P3 instances to its parity-enabled SDV solution, AWS provides a complete data handling platform for AV developers, allowing them to realize scale cost-effectively. This underlines AWS’s position as an integral partner in AV deployment.